BERT vs GPT: The Ultimate Architecture Showdown for NLP Professionals

Comprehensive comparison of BERT vs GPT architectures for NLP. Learn when to choose bidirectional comprehension or autoregressive generation for your projects.

BERT and GPT are two of the most influential language models in NLP. But which one should you choose for a given job?

The answer isn't easy. Picking the wrong one can sink a project or drive up costs. Both models changed how computers handle language, and both are built on the same basic design, the transformer. But they do completely different jobs and work best in different situations.

Learning about these differences helps you pick the right technology that fits exactly what you need.

The Tale of Two Transformers: Understanding the Fundamental Divide

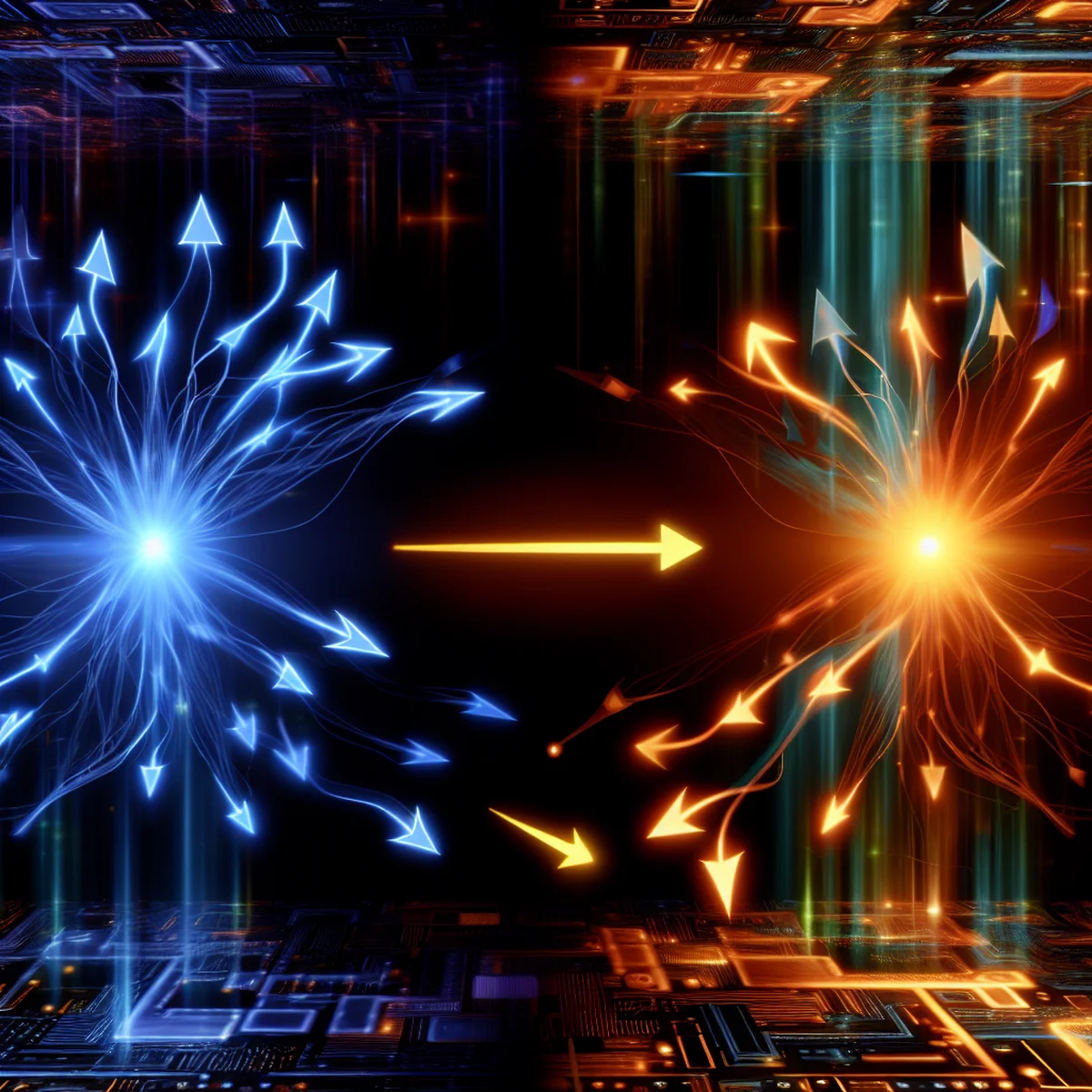

BERT and GPT are both built on the same basic building block, the transformer. But they use it in completely different ways.

BERT stands for Bidirectional Encoder Representations from Transformers. It was built to understand language really well by reading text in both directions at the same time. When BERT looks at a word, it checks what comes before it and what comes after it. This helps BERT understand what words really mean in different situations.

GPT stands for Generative Pre-trained Transformer. It works in a totally different way. GPT reads text from left to right, just like you do when reading a book. As it reads, it tries to guess what word should come next. This makes GPT really good at writing new text that sounds like a human wrote it.

The main difference is simple: BERT was made to understand text that already exists, while GPT was made to create brand new text.

Architecture Deep Dive: How BERT and GPT Actually Work

BERT and GPT work in very different ways. BERT uses only the encoder part of a transformer, which helps it understand text really well. When BERT reads a sentence, it looks at every word and sees how each word connects to all the other words at the same time. This helps BERT understand what the whole sentence means.

BERT can look at the words that come before and after each word, which helps it pick up on context that a one-directional model would miss.

GPT works the opposite way. It uses only the decoder part of the transformer and applies a causal attention mask, a blocking system that stops each position from seeing the words that come later in the sentence.

This mask forces GPT to learn patterns so it can predict the next word using only the words it has already seen. It matters because it teaches GPT to write text one word at a time and produce sentences that make sense without cheating by looking ahead.
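The difference in what each model is allowed to see can be made concrete with a small sketch. The function names below are made up for illustration, and the masks are plain Python lists rather than the tensors a real transformer would use. A 1 means "this position may attend to that position" and a 0 means "blocked".

```python
import math

def bidirectional_mask(n):
    """Encoder-style (BERT-like) visibility: every position sees every position."""
    return [[1] * n for _ in range(n)]

def causal_mask(n):
    """Decoder-style (GPT-like) visibility: position i sees positions 0..i only."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

def masked_softmax(scores, mask_row):
    """Turn raw attention scores into weights, giving blocked positions weight 0."""
    exps = [math.exp(s) if m else 0.0 for s, m in zip(scores, mask_row)]
    total = sum(exps)
    return [e / total for e in exps]

tokens = ["the", "cat", "sat", "down"]
for name, mask in [("bidirectional", bidirectional_mask(len(tokens))),
                   ("causal", causal_mask(len(tokens)))]:
    print(name)
    for tok, row in zip(tokens, mask):
        print(f"  {tok:>5}: {row}")
```

In the causal case, the row for "cat" can see "the" and "cat" but not "sat" or "down", which is exactly the no-looking-ahead rule described above.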

These different ways of working make BERT and GPT good at different jobs.

Training Philosophy: The Secret Behind Each Model's Superpowers

BERT and GPT learn in different ways, which is why each one is good at different things. BERT is pre-trained on two tasks: masked language modeling (guessing hidden words) and next sentence prediction (figuring out if two sentences belong together). For masked language modeling, the training procedure hides a random subset of the words in each passage, about 15% in the original BERT setup, and makes BERT figure out what's missing. BERT looks at all the words before and after the hidden word to make its guess. This teaches BERT how words work together and what they mean in context. For next sentence prediction, BERT decides whether the second of two sentences actually follows the first, which helps it understand how ideas connect.
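The hidden-word exercise can be sketched in a few lines. This is a simplified illustration, not BERT's actual data pipeline: the function name `mask_tokens` is hypothetical, it works on whole words rather than subword tokens, and it always substitutes a `[MASK]` placeholder, whereas real pipelines add further refinements. The default rate of 0.15 follows the original BERT setup.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens and remember the originals as labels.

    Returns (inputs, labels). labels[i] is the original token where a mask
    was applied, and None where the token was left visible (no loss there).
    """
    rng = random.Random(seed)  # seeded so the example is repeatable
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK)
            labels.append(tok)
        else:
            inputs.append(tok)
            labels.append(None)
    return inputs, labels

sentence = "the bank raised interest rates again this quarter".split()
inputs, labels = mask_tokens(sentence, mask_prob=0.3, seed=1)
print(inputs)
print(labels)
```

The model only has to predict the positions where `labels` is not `None`, and it may use every visible word on both sides of each gap to do so.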

GPT learns by trying to predict what word comes next. During training, it is shown the beginning of a passage and has to work out which word should follow. GPT does this over and over across a very large collection of text until it learns how language flows and sounds natural. This training helps GPT write text that sounds like a real person wrote it.
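One way to picture this objective is to list the practice questions a single sentence produces. The helper below is a hypothetical illustration that works on whole words. Real training uses subword tokens and scores all positions of a sequence in one pass, but the pairing of "what has been seen so far" with "what comes next" is the same idea.

```python
def next_token_examples(tokens):
    """Return (context, target) pairs: each prefix predicts the token after it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

for context, target in next_token_examples(["the", "cat", "sat", "down"]):
    print(context, "->", target)
```

Every context contains only earlier words, so the model never gets to peek at the answer.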

These different ways of learning explain why BERT is great at understanding and studying text that already exists, while GPT is amazing at creating new text that makes sense and flows well.

Real-World Performance: Where Each Model Truly Shines

BERT reads text in both directions at the same time, which makes it really good at understanding what words mean in context. In sentiment analysis, it can read the whole sentence before deciding whether the writer is happy or unhappy, so it catches clues that come later in the text. In extractive question answering, BERT reads a question and a passage together, then picks out the exact span of the passage that answers it. It classifies emails, documents, and social media posts into categories well because it takes the full context into account. BERT is also strong at named entity recognition: finding the names of people, places, and companies in text.

GPT works best when it needs to create new text that sounds like a real person wrote it. It can write ads, instruction manuals, and other content that keeps the same style throughout. GPT powers chatbots that hold natural conversations and give helpful answers. It can also write computer code from a plain-language description of what the program should do.

The Decision Matrix: Choosing Your NLP Champion

Picking between BERT and GPT depends on what you want your system to do. Choose BERT when you need to understand, analyze, or find information in text that already exists. BERT is great at classifying documents, telling whether comments are positive or negative, supporting compliance checks, and finding specific facts in writing. BERT is also a strong fit when precise meaning matters, as in medical applications or the review of legal documents.

Pick GPT when you want to create new writing or build programs that talk with people. GPT works well for generating ad copy automatically, helping with creative stories, building customer-support chatbots, and creating teaching tools that explain things. GPT is especially good when generated text needs a consistent voice or when you want personalized responses for lots of different people.

For more complex applications, you can use BERT and GPT together, one to understand the text and the other to create new content. But this makes the system much harder to build and takes far more computing power to run.
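The recommendations in this section can be written down as a small lookup. This is only a sketch: the task names and the function `recommend_model` are illustrative labels chosen for this example, not a standard taxonomy, and a real decision would also weigh cost, latency, and data.

```python
# Illustrative task groupings based on the recommendations in this section.
UNDERSTANDING_TASKS = {
    "classification", "sentiment_analysis",
    "question_answering", "named_entity_recognition",
}
GENERATION_TASKS = {
    "copywriting", "chatbot", "code_generation", "creative_writing",
}

def recommend_model(tasks):
    """Map a collection of task names to 'BERT-style', 'GPT-style', or 'both'."""
    tasks = set(tasks)
    unknown = tasks - UNDERSTANDING_TASKS - GENERATION_TASKS
    if unknown:
        raise ValueError(f"unrecognized tasks: {sorted(unknown)}")
    needs_understanding = bool(tasks & UNDERSTANDING_TASKS)
    needs_generation = bool(tasks & GENERATION_TASKS)
    if needs_understanding and needs_generation:
        return "both"  # hybrid setup: more complex and more costly to run
    if needs_generation:
        return "GPT-style"
    if needs_understanding:
        return "BERT-style"
    raise ValueError("no tasks given")

print(recommend_model(["sentiment_analysis"]))
print(recommend_model(["chatbot", "classification"]))
```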

Beyond the Basics: Limitations and Considerations

BERT needs powerful hardware to work well: plenty of memory and fast processors. Without these, the model runs slowly and can't answer questions quickly. Training it for special jobs requires strong computers and experts who understand the technology.

GPT models have similar hardware needs, and the biggest versions need expensive equipment to run properly. GPT is also slower than BERT at producing output, because it generates text one word at a time, while BERT processes the whole input in a single pass.
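The cost of working one word at a time shows up most clearly during generation, where every new word needs its own model call. The loop below sketches that pattern. `TOY_NEXT` is a made-up lookup table standing in for a real model, and `generate` is a hypothetical helper that assumes a non-empty prompt and always picks the single most likely next word.

```python
# Hypothetical toy "model": maps the last token to the next one.
TOY_NEXT = {"the": "cat", "cat": "sat", "sat": "down", "down": "<eos>"}

def generate(prompt, max_new_tokens=10):
    """Greedy one-token-at-a-time generation; returns (tokens, model_calls)."""
    tokens = list(prompt)
    model_calls = 0
    for _ in range(max_new_tokens):
        next_tok = TOY_NEXT.get(tokens[-1], "<eos>")  # stands in for one forward pass
        model_calls += 1
        if next_tok == "<eos>":  # the model signals that the text is finished
            break
        tokens.append(next_tok)
    return tokens, model_calls

tokens, model_calls = generate(["the"])
print(tokens, model_calls)
```

An encoder-style classifier, by contrast, reads its whole input in one pass and returns a label, so its cost does not grow with the length of an output.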

Both models usually need extra training, called fine-tuning, for special jobs. This makes them harder and more expensive to use. Both were pre-trained on huge amounts of text, and fine-tuning works best with high-quality data that experts have checked and labeled correctly.

Both BERT and GPT can learn biases from the data they were trained on. This creates serious problems. Teams must test the models regularly and apply mitigation methods to reduce these biases before using them in real situations.

Conclusion

Picking between BERT and GPT isn't about finding the best one. It's about matching what each model does well with what you need. BERT reads text in both directions at once, which makes it well suited to understanding and analyzing text that already exists. GPT creates text one word at a time, which makes it great at writing new content that sounds like a human wrote it. When you understand how these models work differently, you can choose the right one for your job. As AI keeps getting better, knowing these basics will help you understand new technology and make smart choices about which tools to use. The best results come from using the right tool for each task, and now you know how to pick between them.

AI-Generated Content Disclaimer

This article was researched and written by an AI agent. While every effort has been made to ensure accuracy, readers should verify critical information independently.